The Cityscapes Dataset

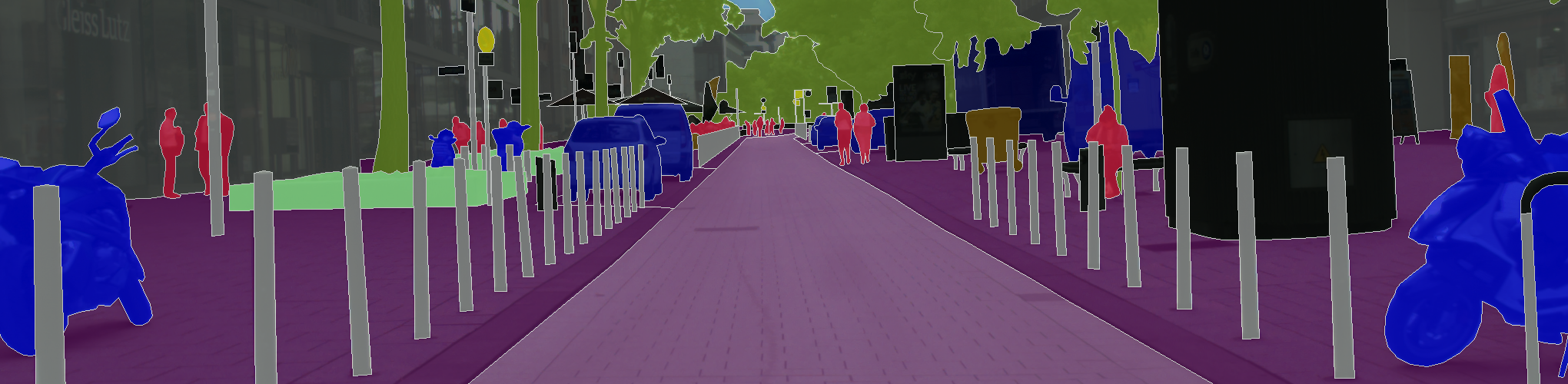

We present a new large-scale dataset that contains a diverse set of stereo video sequences recorded in street scenes from 50 different cities, with high-quality pixel-level annotations of 5 000 frames in addition to a larger set of 20 000 weakly annotated frames. The dataset is thus an order of magnitude larger than similar previous attempts. Details on annotated classes and examples of our annotations are available at this webpage.

The Cityscapes Dataset is intended for:

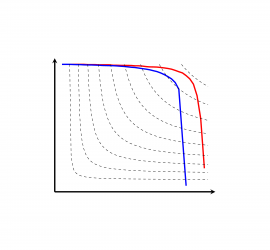

- assessing the performance of vision algorithms for major tasks of semantic urban scene understanding: pixel-level, instance-level, and panoptic semantic labeling;

- supporting research that aims to exploit large volumes of (weakly) annotated data, e.g. for training deep neural networks.
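As a rough illustration of how pixel-level semantic labeling can be assessed, the sketch below computes per-class intersection-over-union (IoU = TP / (TP + FP + FN)), the kind of score the Cityscapes pixel-level benchmark reports. It is a minimal pure-Python sketch under stated assumptions, not the official evaluation code from `cityscapesscripts`. It omits the instance-weighted iIoU variant, and the function names, the `ignore_label` value of 255, and the toy label maps are all hypothetical choices made for illustration.

```python
from typing import Dict, Sequence

def per_class_iou(
    gt: Sequence[Sequence[int]],
    pred: Sequence[Sequence[int]],
    num_classes: int,
    ignore_label: int = 255,  # hypothetical "void" id; not taken from the official scripts
) -> Dict[int, float]:
    """Return IoU = TP / (TP + FP + FN) for every class seen in gt or pred."""
    if len(gt) != len(pred) or any(len(a) != len(b) for a, b in zip(gt, pred)):
        raise ValueError("gt and pred must have the same shape")
    tp = [0] * num_classes
    fp = [0] * num_classes
    fn = [0] * num_classes
    for gt_row, pred_row in zip(gt, pred):
        for g, p in zip(gt_row, pred_row):
            if g == ignore_label:
                continue  # void pixels count towards no class
            if g == p:
                tp[g] += 1  # correct prediction for class g
            else:
                fn[g] += 1  # ground-truth class g was missed
                if 0 <= p < num_classes:
                    fp[p] += 1  # class p was predicted where it does not belong
    ious = {}
    for c in range(num_classes):
        denom = tp[c] + fp[c] + fn[c]
        if denom > 0:  # skip classes absent from both gt and pred
            ious[c] = tp[c] / denom
    return ious

def mean_iou(ious: Dict[int, float]) -> float:
    """Unweighted average of the per-class IoU values."""
    return sum(ious.values()) / len(ious) if ious else 0.0

# Hypothetical 2x3 label maps with two classes (0 and 1) and one void pixel.
gt_example = [[0, 0, 1],
              [1, 1, 255]]
pred_example = [[0, 1, 1],
                [1, 0, 0]]

example_ious = per_class_iou(gt_example, pred_example, num_classes=2)
print(example_ious, mean_iou(example_ious))
```

In this toy case class 0 has one true positive, one false positive and one false negative (IoU 1/3), and class 1 has two true positives, one false positive and one false negative (IoU 0.5). The void pixel is ignored even though the prediction labels it as class 0.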

Latest News

- Cityscapes 3D Benchmark Online

Cityscapes 3D is an extension of the original Cityscapes with 3D bounding box annotations for all types of vehicles, as well as a benchmark for the 3D detection task. For more details, please refer to our paper, presented at the CVPR 2020 Workshop on Scalability in Autonomous Driving. Today, we extended our benchmark and evaluation server to include the 3D vehicle detection task. To train and evaluate your method, check out our toolbox on GitHub, which can be installed using pip, i.e. `python -m pip install cityscapesscripts[gui]`. To visualize the 3D boxes, run `csViewer` and select the CS3D…

License

The Cityscapes Dataset is made freely available to academic and non-academic entities for non-commercial purposes such as academic research, teaching, scientific publications, or personal experimentation. Permission is granted to use the data provided that you agree to our license terms.